Re: WIP: Upper planner pathification

| From: | Tom Lane <tgl(at)sss(dot)pgh(dot)pa(dot)us> |

|---|---|

| To: | Robert Haas <robertmhaas(at)gmail(dot)com> |

| Cc: | "pgsql-hackers(at)postgresql(dot)org" <pgsql-hackers(at)postgresql(dot)org> |

| Subject: | Re: WIP: Upper planner pathification |

| Date: | 2016-03-05 18:09:25 |

| Message-ID: | 21211.1457201365@sss.pgh.pa.us |


| Lists: | pgsql-hackers |

I wrote:

> Robert Haas <robertmhaas(at)gmail(dot)com> writes:

>> One idea might be to run a whole bunch of queries and record all of

>> the planning times, and then run them all again and compare somehow.

>> Maybe the regression tests, for example.

> That sounds like something we could do pretty easily, though interpreting

> the results might be nontrivial.

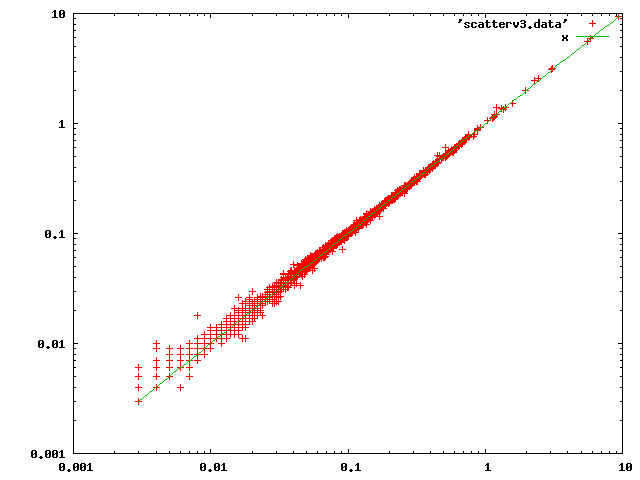

I spent some time on this project. I modified the code to log the runtime

of standard_planner along with decompiled text of the passed-in query

tree. I then ran the regression tests ten times with cassert-off builds

of both current HEAD and HEAD+pathification patch, and grouped all the

numbers for log entries with identical texts. (FYI, there are around

10000 distinguishable queries in the current tests, most planned only

once or twice, but some as many as 2900 times.) I had intended to look

at the averages within each group, but that was awfully noisy; I ended up

looking at the minimum times, after discarding a few groups with

particularly awful standard deviations. I theorize that a substantial

part of the variation in the runtime depends on whether catalog entries

consulted by the planner have been sucked into syscache or not, and thus

that using the minimum is a reasonable way to eliminate cache-loading

effects, which surely ought not be considered in this comparison.
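The grouping procedure described above can be sketched roughly as follows. This is a minimal Python illustration, not the script that was actually used. The input format (pairs of query text and planning time in milliseconds), the function names, and the relative-standard-deviation cutoff are all assumptions; the message does not say how the "particularly awful" groups were identified.

```python
import statistics
from collections import defaultdict


def min_planning_times(entries, max_rel_stddev=1.0):
    """Group (query_text, planning_ms) pairs by identical text and
    return {query_text: minimum planning_ms}.

    Groups whose sample standard deviation exceeds max_rel_stddev times
    their mean are dropped. The cutoff is a hypothetical choice made for
    this sketch, not a value taken from the original analysis.
    """
    groups = defaultdict(list)
    for text, ms in entries:
        groups[text].append(ms)

    result = {}
    for text, times in groups.items():
        # A standard deviation needs at least two samples; groups with a
        # single sample are kept as they are.
        if len(times) > 1:
            mean = statistics.fmean(times)
            if mean > 0 and statistics.stdev(times) / mean > max_rel_stddev:
                continue  # too noisy to compare
        result[text] = min(times)
    return result


def paired_minimums(head_entries, patch_entries, max_rel_stddev=1.0):
    """Return {query_text: (head_min_ms, patch_min_ms)} for queries that
    survive the noise filter in both runs."""
    head = min_planning_times(head_entries, max_rel_stddev)
    patch = min_planning_times(patch_entries, max_rel_stddev)
    return {q: (head[q], patch[q]) for q in head.keys() & patch.keys()}
```

Each resulting pair corresponds to one point in a HEAD-versus-patch comparison. Taking the minimum rather than the mean follows the reasoning above: a run that had to load catalog entries into syscache can only be slower, so the fastest run in a group is the least contaminated one.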

Here is a scatter plot, on log axes, of planning times in milliseconds

with HEAD (x axis) vs those with patch (y axis):

| Attachment | Content-Type | Size |
|---|---|---|
| | image/png | 4.7 KB |
| unknown_filename | text/plain | 2.1 KB |
| | image/png | 4.6 KB |
| unknown_filename | text/plain | 364 bytes |

In response to

- Re: WIP: Upper planner pathification at 2016-03-03 20:52:26 from Tom Lane

Responses

- Re: WIP: Upper planner pathification at 2016-03-05 18:32:58 from Greg Stark

- Re: WIP: Upper planner pathification at 2016-03-08 17:18:40 from Tom Lane
